Some consider deepfakes a technological breakthrough, some see them as a threat to humanity, and some are still unsure how to feel about them. Every now and then, a new story or video emerges about someone using deepfakes. In this article, we will delve into what deepfakes are, how they affect various domains, and whether their development should make us worry about our personal security.

What Are Deepfakes and How Did They Emerge?

Deepfakes are fake photos, videos, or audio recordings that appear to be real, and the term also covers the technology used to create them. The process relies on neural networks that generate content, superimposing one photo or video onto another. Spotting such alterations is sometimes almost impossible.

A prominent example is a 2021 video featuring a purported Morgan Freeman discussing a “synthetic reality” where you won’t be able to discern between the real and the artificial.

The essence of a deepfake is that the forgery goes undetected. Initially, this technology was available only to a narrow group of specialists, such as people working in the movie industry or post-production.

However, in 2017, a Reddit user uploaded pornographic material with celebrities’ faces swapped in. The videos were fake, but they attracted attention and sparked interest in the technology. Since then, such fake materials have been called deepfakes, after that user’s nickname.

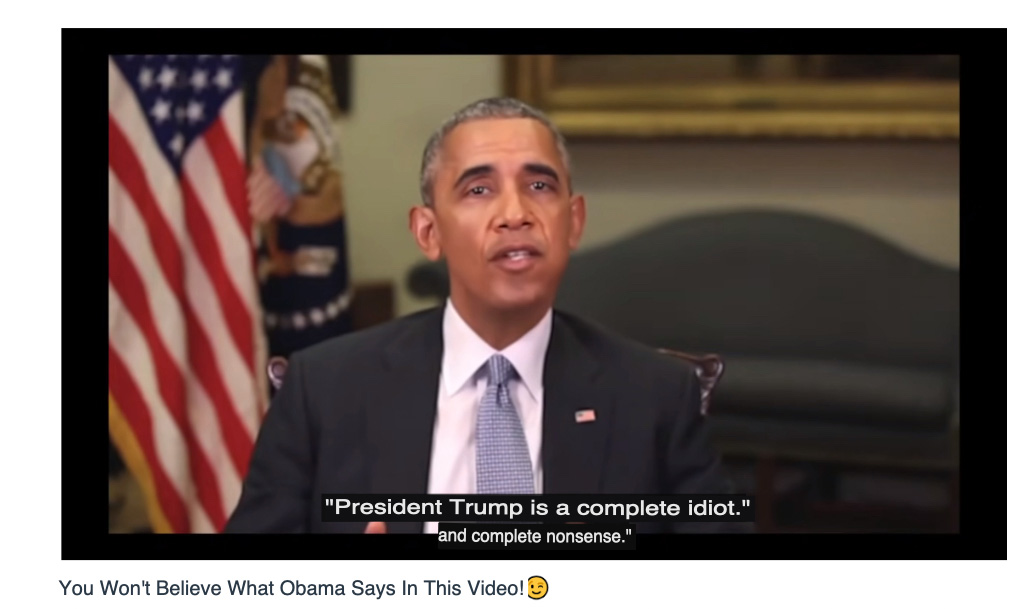

Once the technology became accessible to the general public, videos featuring celebrities, politicians, and actors began to appear online. One famous example is a 2018 video in which “Barack Obama” calls “Donald Trump” a “total and complete dipshit.” It involved not only video manipulation but also audio manipulation.

The video itself was meant to be “instructive,” much like the one with Morgan Freeman: it raises the question of how far we can trust content that might turn out to be unreal. The video’s creator acknowledges in the description that it is a deepfake, although in most cases creators of such content try to conceal that fact.

| According to the World Economic Forum, the number of deepfake materials increases by 900% each year. Most people will never recognize most of this deception as it filters through tons of information they don’t even pay attention to. |

According to DeepMedia research, the number of such videos reached at least 500,000 by the end of 2023. The number could be even higher because creating fake materials is becoming easier thanks to advanced neural networks.

Creating a deepfake consists of several steps:

- Data collection: First, a large number of images and audio recordings must be gathered to train the artificial intelligence. This data can come from publicly available sources such as social media, interviews, or movies.

- Model training: Machine learning is used to create a model that analyzes and learns from the data. It attempts to identify patterns in lip movements, facial expressions, intonations, and speech to recreate them in the future.

- Fake creation: After training, the model is used to generate fake videos or audio recordings in which people, for instance, appear to say phrases they never actually said.

- Refinement: Over time, the model becomes more precise and produces realistic deepfakes.

Creating fake videos is a complex technical task. In the following section, we will discuss a specific program that helps achieve this.

Inner Workings: How Deepfakes Are Created

The technology employs two algorithms, a generator and a discriminator, to create and refine fake content. The generator produces the initial fake content, aiming at the desired outcome. The discriminator, in turn, assesses how realistic or fake that content looks.

This process is repeated, allowing the generator to improve the creation of realistic content, and the discriminator to become “experienced” in detecting flaws that the generator needs to correct.
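To make the generator and discriminator loop more concrete, here is a minimal sketch on one-dimensional numbers instead of images. Everything in it is an assumption made for illustration: the “real” data is drawn from a normal distribution around 4, the generator is a simple linear function of random noise, and the discriminator is a single logistic unit. Real deepfake tools use far larger networks, and such training typically oscillates rather than settling neatly.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Alternate discriminator and generator updates on 1-D data.

    "Real" samples come from N(4, 1). The generator maps noise z to
    b + a * z, and the discriminator scores a sample x as sigmoid(w * x + c).
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters
    for _ in range(steps):
        real = rng.gauss(4.0, 1.0)
        z = rng.gauss(0.0, 1.0)
        fake = b + a * z

        # Discriminator step: raise its score for real data, lower it for fakes.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: adjust a and b so the updated discriminator
        # rates the fake sample as more realistic.
        d_fake = sigmoid(w * fake + c)
        grad_fake = (1.0 - d_fake) * w  # derivative of log D(fake) w.r.t. fake
        a += lr * grad_fake * z
        b += lr * grad_fake
    return a, b, w, c


a, b, w, c = train_toy_gan()
print(f"generator: fake = {b:.2f} + {a:.2f} * z")
print(f"discriminator score for x = 4: {sigmoid(w * 4.0 + c):.2f}")
```

Each pass through the loop is one round of the contest described above: the discriminator gets slightly better at telling real from fake, and the generator then shifts its output in the direction the discriminator currently finds convincing.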

Online generators can handle the task and create a basic video. However, the quality is so poor that the fake is easy to spot. That’s why more complex programs are used for high-quality content.

Although deepfake generation services have become accessible to the average user, creating your own deepfake video is still challenging. Quality work requires time:

- The more complex the facial expressions and articulation of the person in the original video, the more time it will take to replace their face. If the video features several people with very different faces, the task becomes even more complicated.

- Your computer needs to meet minimum requirements: a processor with SSE instruction support, 2 GB of RAM, and an OpenCL-compatible graphics card. Even then, there’s a risk that your hardware may crash, and even a 15-second video could take days to render.

- No program can currently combine two videos seamlessly on its own. If you want to create a good video, you’ll need to understand data structures and algorithms, and there will be many unsuccessful attempts at first.

In short, deepfakes are made by showing a computer program many videos of one person. The program learns their face, then puts it onto another video, like a digital mask. While cool, this takes a lot of time and effort, making it more complex than it seems.

There are now many possibilities for transferring an image from one video to another. Let’s explore the most common one.

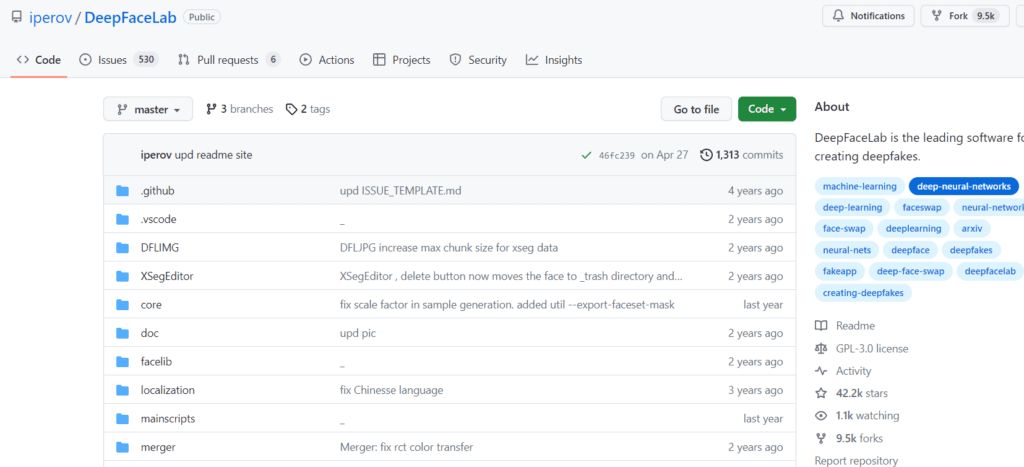

DeepFaceLab

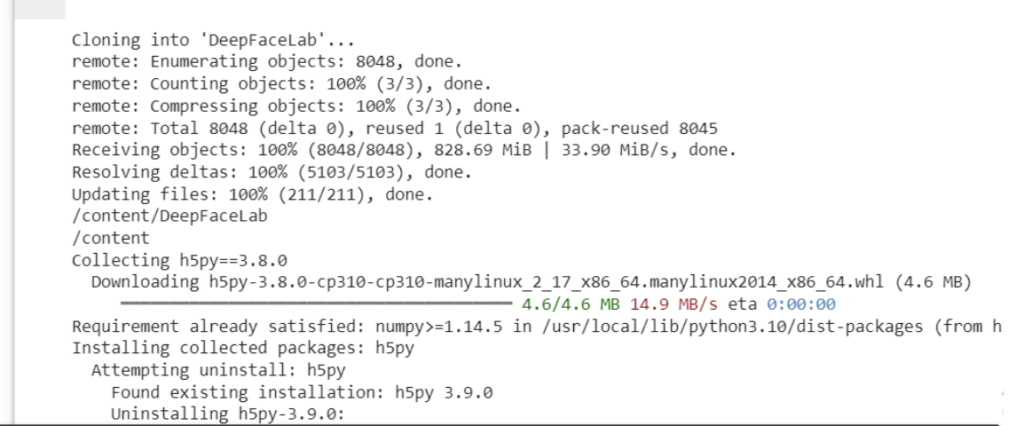

Creating your own video in DeepFaceLab takes some time. You can download the program from its official GitHub page, choosing either the Windows or the Linux version.

Several releases are available. We will describe creating a deepfake with the Windows release. To avoid downloading the archive to your computer and dealing with its extraction, you can perform all the steps in Google Colab instead. To do this, log in to your Google account and open Colab.

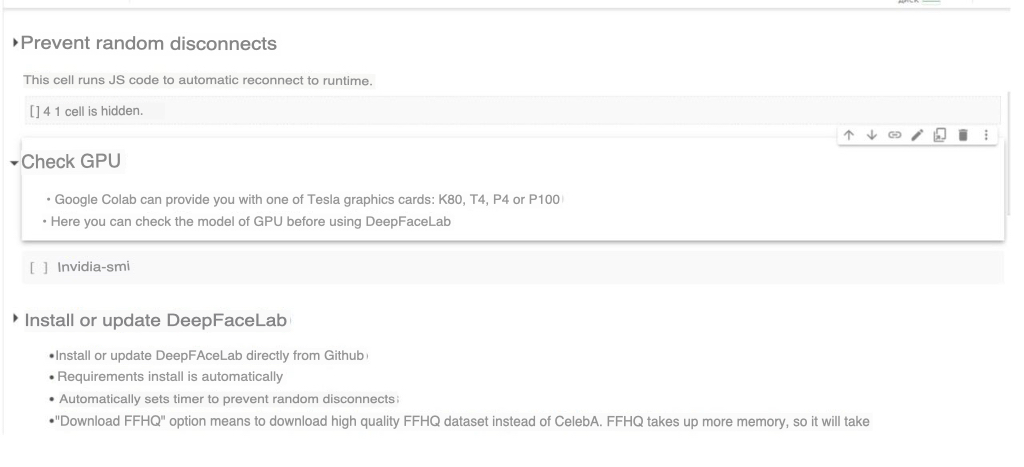

First, run the “Prevent random disconnects” code so that your Colab session is not dropped unexpectedly in case of network issues. We also recommend checking which GPU model has been allocated to your session using “Check GPU.”
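If you want to check the allocated GPU yourself, outside of the notebook’s own cell, a small sketch like the following can do it. It assumes an NVIDIA GPU with the `nvidia-smi` tool installed, and the helper name `describe_gpu` is made up for this example; what the notebook’s “Check GPU” cell does internally may differ.

```python
import shutil
import subprocess
from typing import Optional


def describe_gpu() -> Optional[str]:
    """Return the GPU name reported by nvidia-smi, or None if unavailable."""
    if shutil.which("nvidia-smi") is None:
        return None  # the NVIDIA driver tools are not installed here
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=False,
    )
    name = result.stdout.strip()
    return name if result.returncode == 0 and name else None


print(describe_gpu() or "No NVIDIA GPU detected")
```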

After checking, you need to upload the working environment by archiving the workspace directory with two files in it: data_dst.mp4 and data_src.mp4. Before that, make sure the code is compatible.
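Archiving the workspace can be done by hand or scripted. The sketch below uses Python’s standard `zipfile` module; the function name `pack_workspace` and the choice to keep a top-level `workspace/` folder inside the archive are assumptions for illustration, so check what layout the notebook expects before relying on it.

```python
import zipfile
from pathlib import Path
from typing import List


def pack_workspace(workspace: Path, archive: Path) -> List[str]:
    """Zip data_src.mp4 and data_dst.mp4 from `workspace` into `archive`."""
    required = ["data_src.mp4", "data_dst.mp4"]
    missing = [name for name in required if not (workspace / name).is_file()]
    if missing:
        raise FileNotFoundError(f"missing in {workspace}: {', '.join(missing)}")
    # Video is already compressed, so store the files without recompressing.
    with zipfile.ZipFile(archive, "w", compression=zipfile.ZIP_STORED) as zf:
        for name in required:
            # Assumption: keep a top-level "workspace/" folder in the archive.
            zf.write(workspace / name, arcname=f"workspace/{name}")
        return zf.namelist()
```

Calling `pack_workspace(Path("workspace"), Path("workspace.zip"))` from the folder that contains `workspace` produces an archive ready for upload, and raises an error early if either video is missing.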

Now you can load the working environment. The cell prints a log as it runs, and the final lines should confirm a successful connection to the GPU environment.

For example, the PC version of DeepFaceLab ships with two test videos. If you need to collaborate with a team, it’s more convenient to work with videos via Google Drive. Upload the archive to Google Drive, then run the “Import from Drive” code and grant access to your storage. The files will be unpacked. The initial unpacking may fail a few times, and the connection to Google Drive may drop. Don’t worry: try again, and eventually you should connect.

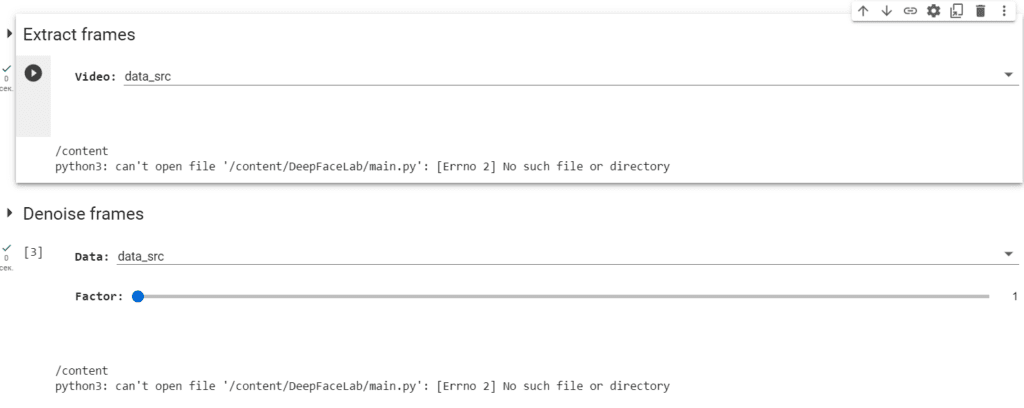

Next, run the “Extract Frames” code for data_src and data_dst.
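Conceptually, this step turns each video into a folder of numbered still images. As a rough illustration only, the sketch below builds an equivalent command for the `ffmpeg` command-line tool; the helper name `frame_extract_command` and the output folder layout are assumptions, and DeepFaceLab’s own extractor may use different settings.

```python
from pathlib import Path
from typing import List


def frame_extract_command(video: Path, out_dir: Path, fps: int = 0) -> List[str]:
    """Build an ffmpeg command that writes the frames of `video` as PNG files."""
    cmd = ["ffmpeg", "-i", str(video)]
    if fps > 0:
        cmd += ["-vf", f"fps={fps}"]  # optionally sample fewer frames per second
    cmd.append(str(out_dir / "%05d.png"))  # 00001.png, 00002.png, ...
    return cmd


for name in ("data_src", "data_dst"):
    cmd = frame_extract_command(Path(f"workspace/{name}.mp4"), Path(f"workspace/{name}"))
    print(" ".join(cmd))
    # To actually run it (ffmpeg must be installed and the folder must exist):
    # import subprocess; subprocess.run(cmd, check=True)
```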

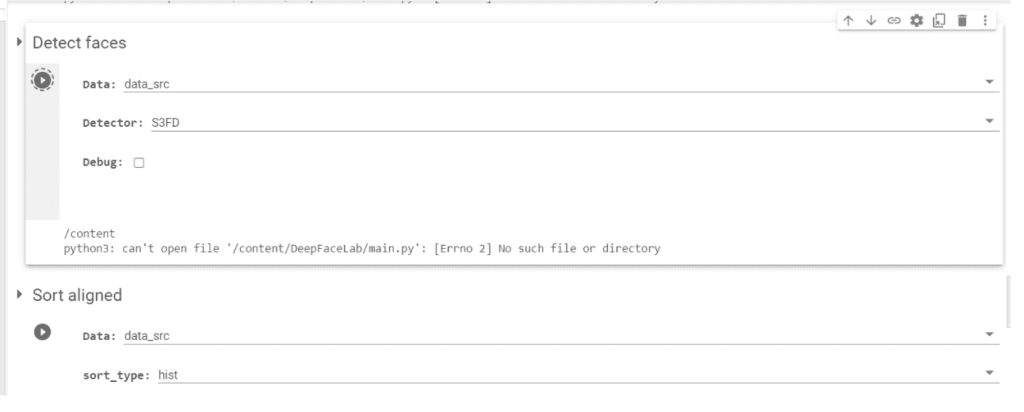

Now, let’s work with the neural network. We start with frame alignment. At this stage, the neural network finds and crops the faces in each frame, all thanks to the “Detect Faces” code. Run it first for one file, and then for the other.

Once the process is complete, all materials will appear in the workspace\data_dst\aligned and workspace\data_src\aligned directories. So, the result will be available in the “Files” tab. Next, you’ll need to remove unsuccessful frames and download all the work to your computer.

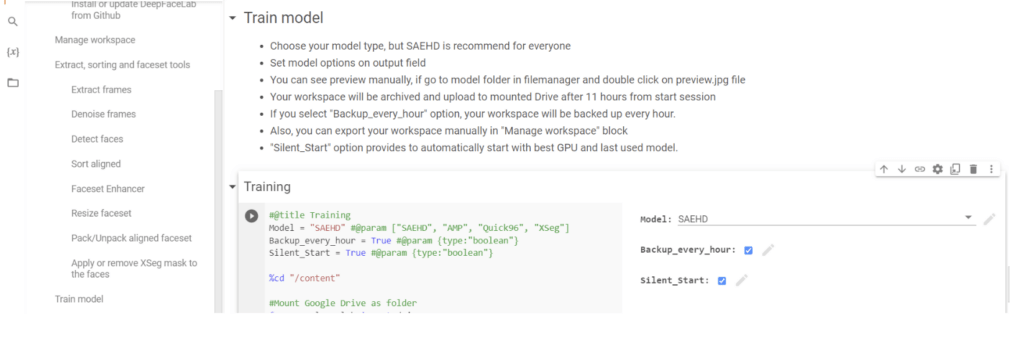

Facial transfer will involve more parameters. We move on to training.

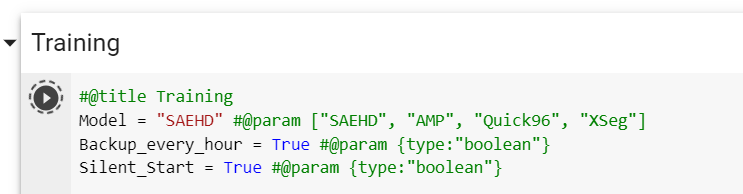

Start by ticking the “Backup every hour” checkbox. Your working environment will then be backed up every hour, so you won’t have to start the project from scratch if something fails. Then enter the model’s name and leave the rest at the defaults.

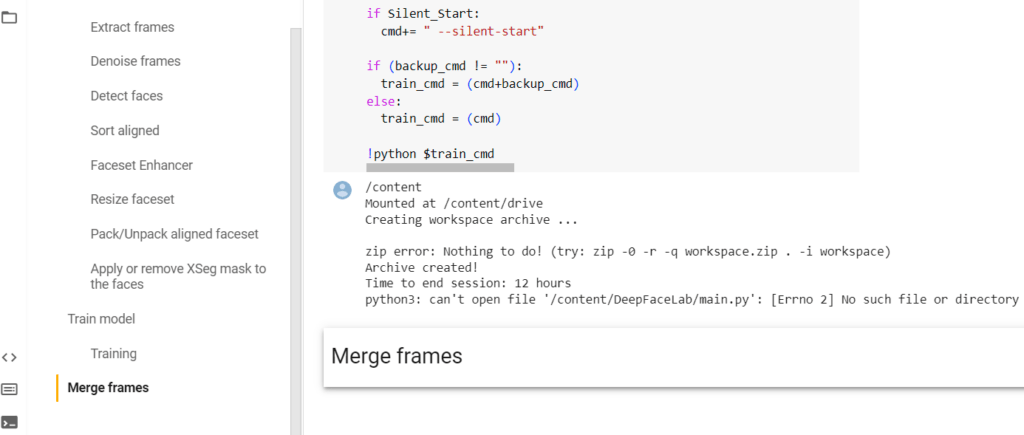

After training is complete, you should integrate the “new face” into the target video. Use the “Merge Frames” option.

When launching it, you will need to set the merging parameters. You can refer to the table below for guidance.

| Parameter | Purpose |
| --- | --- |
| [1] Choose mode: | Merge options. 0: original (doesn’t change the face); 1: simple overlay; 2-6: various blending options. |
| [4] Choose mask mode: | Mask mode. |
| [0] Choose erode mask modifier (-400..400) : | Resizing the overlay mask. |
| [0] Choose blur mask modifier (0..400) : | Mask edge blur. |
| [0] Choose motion blur power (0..100) : | Motion blur. The more blurred areas in the picture, the higher this parameter. |
| [idt] Color transfer to predicted face (rct/lct/mkl/mkl-m/idt/idt-m/sot-m/mix-m) : | Selection of the skin color matching function between the source and target. |
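To see why the mask settings matter, here is a toy sketch of the difference between a simple overlay and a blended one. It works on a single row of brightness values rather than a real image, and the functions `box_blur` and `blend` are illustrative stand-ins, not DeepFaceLab’s actual implementation.

```python
from typing import List


def box_blur(mask: List[float], radius: int) -> List[float]:
    """Soften a 1-D mask by averaging each value with its neighbours."""
    if radius <= 0:
        return list(mask)
    out = []
    for i in range(len(mask)):
        lo, hi = max(0, i - radius), min(len(mask), i + radius + 1)
        window = mask[lo:hi]
        out.append(sum(window) / len(window))
    return out


def blend(src: List[float], dst: List[float], mask: List[float]) -> List[float]:
    """Per-pixel mix: mask 1.0 takes the new face, 0.0 keeps the original frame."""
    return [m * s + (1.0 - m) * d for s, d, m in zip(src, dst, mask)]


face = [200.0] * 6   # brightness of the generated face
frame = [100.0] * 6  # brightness of the target frame
hard_mask = [0.0, 0.0, 1.0, 1.0, 0.0, 0.0]

print(blend(face, frame, hard_mask))               # abrupt jump at the mask edge
print(blend(face, frame, box_blur(hard_mask, 1)))  # gradual transition
```

With the hard mask, the values jump straight from the frame to the face, which is the kind of visible seam a blurred mask edge is meant to hide. Shrinking or growing the mask before blurring plays the role of the erode setting.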

In the end, you just need to run the ‘Get result video’ code. Everything will be saved in a single file on your Google Drive. A detailed guide for all the steps can be found here.

| The drawback of this program is that fine-tuning the result to its maximum has to be done manually: you’ll need to delve deeper into the data and adjust the parameters yourself at the training and merge stages. |

There are other programs that assist in creating deepfakes. Some of the most popular ones include:

- Faceswap: a free, comprehensive program for creating videos. It requires training, which can take up to 2-3 weeks, during which various videos with different faces are uploaded. The interface is complex, so the program suits advanced users familiar with neural networks.

- Deepfakes web: a paid program that can swap faces in just an hour. The more videos you upload, the better the final result. One hour costs $2.

- Visper: a neural network developed by Sberbank that is better suited to creating simple videos, as face swapping is not available in it.

There are also applications that quickly transfer a face from one object to another, but such tricks are easily noticeable.

Personal Security: How Deepfakes Help with Verification

From 2022 to the first quarter of 2023, fraud involving deepfakes was on the rise. Over that period, the share of deepfakes among all fraud cases grew by 1200% in the United States, 4500% in Canada, and 392% in the United Kingdom. In other words, no part of the world is immune to this technology.

Most often, the fraud involves creating fake accounts, passports, or other documents. For example, in China in 2021, identity substitution using deepfakes led to a major fraud against the tax system.

With deepfake technology, cybercriminals can gain access to accounts that were previously inaccessible or protected.

Vulnerable industries include sectors that work and interact with customers remotely. These include fintech, online gambling, and cryptocurrency platforms. Companies ranging from banks to cryptocurrency exchanges are turning to image and video-based verification.

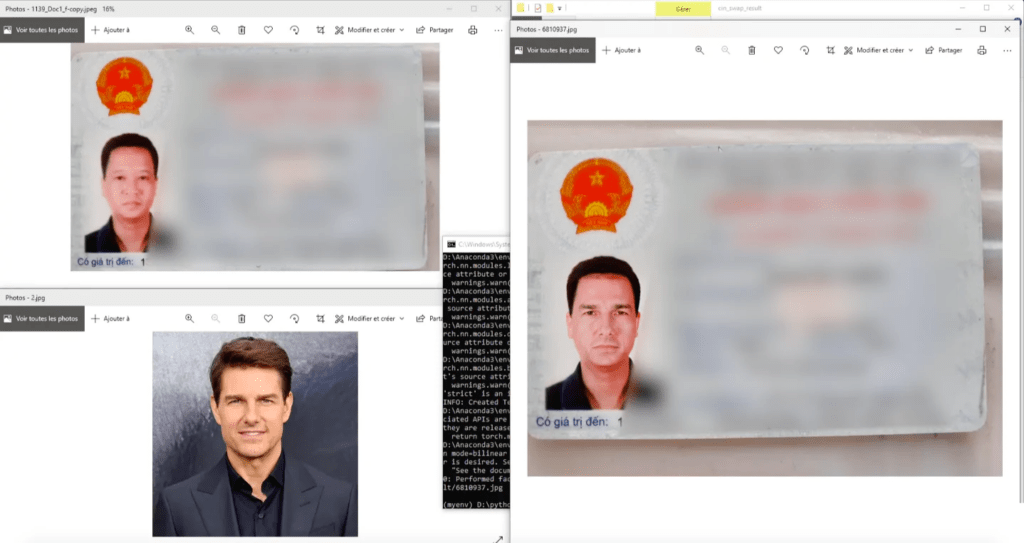

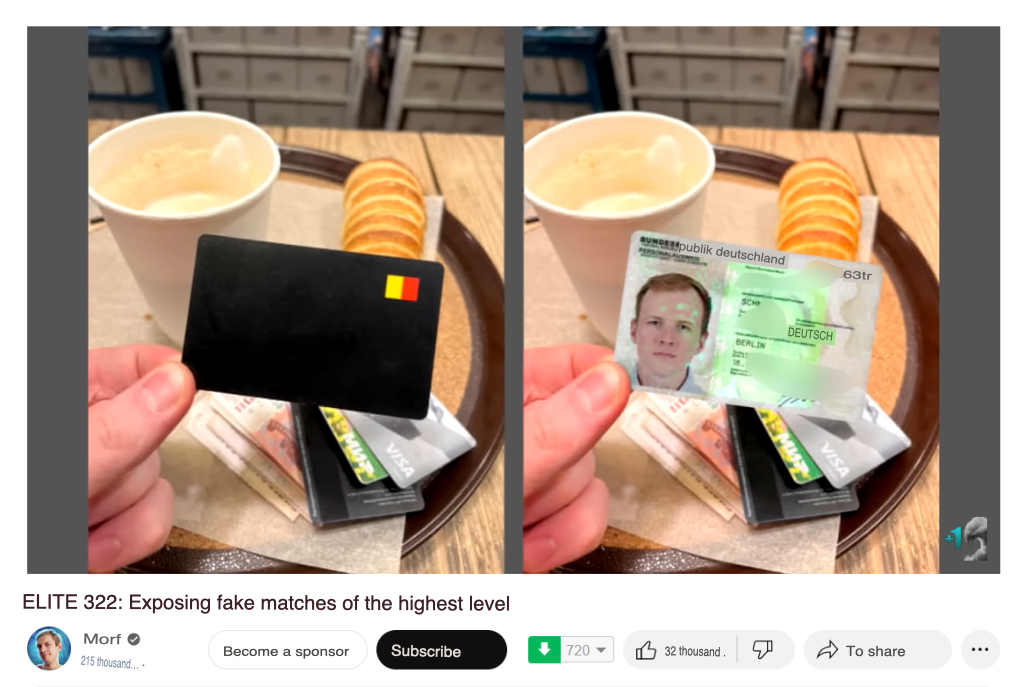

Most of these companies require users to upload official identification, selfies, or special photos taken according to instructions, such as raising fingers or holding a note. Deepfake technologies make it possible to bypass these checks. For example, some bloggers who recently exposed fake matches also mentioned that deepfakes were being used to pass verification checks with bookmakers.

This could significantly simplify the work of professionals who deal with bookmakers: with such technology, there’s no need to be in constant contact with a dropshipping partner or the dropshipper themselves.

| The problem with this method of passing verification is that bookmakers can always request additional documents to ensure the person’s authenticity. |

Currently, high-quality face replacement in photos and videos is complex. There are applications that replace faces, but the deception is evident, so they are not suitable for passing verification. The challenge lies in working with a neural network for weeks: training it to change faces properly, modifying parameters in code, and finding a large number of photos or videos of the person whose face is to be used.

If this technology becomes popular among players and easy to implement, passing verification could become much easier than it is now. However, it is not only deepfake technologies that are constantly improving but also the neural networks that detect them.

Some of the companies leading the fight against deepfakes include:

- Sentinel offers cloud-based real-time deepfake detection.

- Intel’s FakeCatcher is a tool designed to identify deepfakes in real-time.

By implementing these detection tools, companies can add an extra layer of security to their verification processes.

Addressing the Ethical Dilemma

Deepfake technology raises complex ethical issues. The ability to create manipulated videos that appear genuine can be used to damage reputations, spread misinformation, and erode trust in the media and public figures. To address these concerns, several efforts are underway:

- Legislative Efforts: Governments worldwide are exploring legislation to regulate the creation and use of deepfakes. These laws might require creators to label deepfakes or hold them accountable for their misuse.

- Industry Standards: Tech companies and media organizations are working together to develop ethical guidelines and best practices for responsible deepfake creation and use.

- Public Awareness Campaigns: Educating the public on how to identify deepfakes and critically analyze online content is crucial for minimizing their negative impact.

What does the law say about deepfakes?

In many countries, deepfakes are legal, and law enforcement agencies can do little about them despite the serious threats they pose. Fake content becomes illegal only when it violates existing laws, such as those against child pornography, defamation, or incitement to hatred.

If the author states that the content is fake, law enforcement will typically take no legal action against them. Even if the video is offensive, using someone’s likeness for entertainment purposes is generally not treated as infringing copyright or harming their reputation.

As a result, people often cannot file a complaint or lawsuit when their likeness or creative work is used in fake content.

However, the lack of specific deepfake regulations doesn’t mean the issue isn’t being addressed. Here are some ongoing efforts:

- Deepfake detection methods: Researchers and tech companies are actively developing tools to identify deepfakes. These tools analyze factors like facial movements, lip-syncing, and lighting inconsistencies to flag suspicious content.

- Potential future legislation: Several countries, including the United States, are considering legislation to address deepfakes. These laws might focus on requiring creators to label deepfakes as such or holding them liable for the misuse of deepfakes to cause harm.

Where else are deepfakes used?

Deepfakes are primarily associated with fraud or pornography, but there are many areas where fake images or videos can be encountered, including:

- Marketing. Brands and companies use fake videos to promote their services. These videos often overlay the faces of celebrities.

- Education. In 2019, a university in the USA used deepfake technology to create a virtual lecture by a renowned deceased scientist. The goal was to provide students access to historical knowledge and the ideas of eminent figures.

- Journalism. News outlets use such videos to create simulated interviews with controversial figures, allowing reporters to ask questions and receive answers without the need for actual interaction.

- Film Production. Filmmaking is a costly process involving camera rentals, studios, and actor fees. Sometimes, deepfake technology can help a film obtain a specific age rating. For example, the creators of the thriller film ‘Youth’ used deepfakes to release the movie with a lower age rating. Re-shooting such a film would be expensive, so it’s easier to use neural networks to edit the dialogue.

- Virtual Reality. In 2019, a gaming company used deepfake technology to create hyper-realistic characters and environments in a virtual reality game, providing players with an immersive experience.

- Politics. Political deepfakes are some of the most intriguing and high-quality ones. They are used to manipulate public opinion, undermine the reputation of political opponents, or challenge their political positions. For instance, there is a video where Donald Trump “concedes defeat” two months after the elections. Upon closer examination, one can see that the video is fake, but many people won’t scrutinize it closely.

Fake content entertains us, but at the same time, it has become a powerful tool for fraud and cybercrime. For example, in 2020, a group of criminals used deepfakes to defraud a British energy company of £40,000 by posing as the CEO in a series of emails. Such cases are on the rise.

Cybersecurity is also at risk, as deepfake technology increases the efficiency of phishing attacks and simplifies fraudulent operations related to corporate reputation.

How to Spot Deepfakes?

Here are some tips for readers on how to spot deepfakes:

- Look closely at faces: Pay attention to details like unnatural skin texture, inconsistencies in lighting or shadows around the face, and awkward blinking or lip-syncing. Deepfakes sometimes struggle to perfectly capture these subtleties.

- Listen for audio inconsistencies: Does the audio sound off-sync with the speaker’s movements? Are there any strange background noises or glitches? Deepfakes may struggle to seamlessly integrate audio and video.

- Check the source: Where did you see this video or image? Is it from a reputable source known for fact-checking? Be wary of unfamiliar websites or social media accounts.

- Do a reverse image search: Use online tools like Google Images or TinEye to see if the same image or video appears elsewhere online, especially on credible websites.

- Be skeptical of extreme claims: If something seems too good (or bad) to be true, it probably is. Deepfakes are often used to create sensational content, so approach extreme claims with a critical eye.
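Some of these cues can be checked roughly by a program. As a toy illustration of the “awkward blinking” tip, the sketch below counts blinks in a per-frame eye-openness signal. The signal itself is hypothetical (it would have to come from some face-landmark tool), the threshold of 0.2 is arbitrary, and an unusual blink rate is only a hint, not proof that a video is fake.

```python
from typing import List


def count_blinks(openness: List[float], closed_below: float = 0.2) -> int:
    """Count transitions from open to closed in a per-frame eye-openness signal."""
    blinks = 0
    was_closed = False
    for value in openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1
        was_closed = is_closed
    return blinks


def blinks_per_minute(openness: List[float], fps: float) -> float:
    """Convert the blink count into a rate for a clip recorded at `fps`."""
    if not openness or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(openness) / fps / 60.0
    return count_blinks(openness) / minutes


# Hypothetical signal: 1.0 means fully open, 0.0 means fully closed.
signal = [0.9] * 10 + [0.1] * 2 + [0.9] * 10 + [0.1] * 3 + [0.9] * 5
print(count_blinks(signal))
print(blinks_per_minute(signal, fps=30))
```

A clip where the rate is close to zero, or where the eyes flicker open and closed on nearly every frame, would be worth a closer manual look.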

By following these tips, you can become more aware of potential deepfakes and be more critical of the information you consume online. Remember, a healthy dose of skepticism is key in today’s digital age.

Conclusion

Deepfakes represent a powerful means of creating video and audio content that can be almost indistinguishable from reality. Their potential for entertainment, education, research, and security is enormous.

Although the technology has become accessible to all internet users, few can actually use it for their own purposes. Creating high-quality fake content requires several days, or sometimes even weeks, of training the neural network to process videos correctly and make seamless face replacements, and not everyone is willing to invest that time and effort.

Deepfakes also pose a complex ethical dilemma: the ability to create realistic, manipulated videos raises serious privacy concerns.